
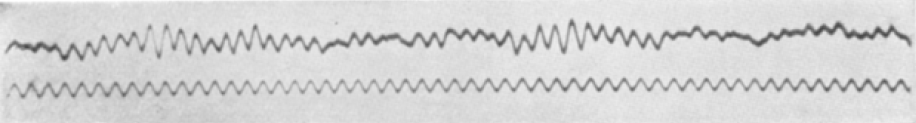

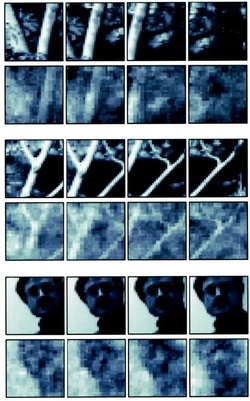

by Jennifer Maccani, PhD

This article is part of the "Emerging Biotechnology" series.

Is telepathic communication possible? As outlandish as it might sound, this question drove Hans Berger to investigate the electrochemical basis of brain activity, a line of research that eventually led to the invention of the electroencephalogram, or EEG, which measures the brain’s electrical impulses via electrodes attached to a person’s scalp (1). We can find clues as to what led Berger down this path by studying his early life.

Berger was born in 1873 in Coburg, Germany. His diaries reveal that he was an introspective and solitary young man. After a short stint at the University of Berlin, Berger turned away from early leanings toward a career in astronomy and enlisted for military service in Würzburg, where a near-death experience radically altered his aspirations. One morning in 1892, Berger’s horse became spooked during an exercise with his artillery unit. Berger was thrown to the ground and into the path of an oncoming artillery cannon’s wheel. Although the cannon stopped just short of crushing him, Berger was thoroughly rattled. When his family sent him a telegram that evening inquiring about his wellbeing, they revealed that his sister had that very morning feared that something had happened to her brother. Berger became convinced that “it was a case of spontaneous telepathy in which at a time of mortal danger I transmitted my thoughts” (2). It may have been this very experience that led him, upon the completion of his military service, to attend Jena University in Jena, Germany, and pursue his research on the electrical activity of the brain (2-4).

[Image: "In Germany I am not so famous."]

The brain itself functions much like a computer. Electrochemical impulses passing from the axon of one neuron to the dendrite of the next transmit information that the nervous system can translate into actions (3, 5). These electrical impulses can travel as fast as 250 miles per hour!
(5, 6)

But is it possible to design and build a machine capable of deciphering these data and harnessing them, rather than simply recording them? As it turns out, it’s far from impossible. Hans Berger’s EEG is the earliest example of what have come to be known as brain-computer interfaces (BCIs), machines capable of detecting and interpreting brain activity (5). But the EEG, though a breakthrough technology, could not translate the brain’s electrical impulses into action, for example by taking in brain signals and controlling a machine to produce corresponding movements. Throughout the twentieth century, neuroscientists continued to look for a way to translate the brain’s signals into meaningful outputs.

[Image: One of the first EEG recordings. Top: EEG waveform; bottom: 10 Hz reference frequency.]

Then, in the 1980s, Apostolos Georgopoulos at Johns Hopkins University devised a mathematical algorithm based on the cosine function that could reconstruct movement signals from the electrical impulses of motor cortex neurons in rhesus macaque monkeys (7). This major finding made it possible for a computer to interpret brain signals, amplify them, and control a machine to reproduce the movements intended by the brain. In other words, the algorithm made it possible to build BCIs capable of translating input from motor neurons into actions performed by a machine, even if a person’s body is incapable of actually performing those actions. This idea had far-reaching implications for many groups, including people with sensory impairments and, in particular, people with physical disabilities.

Visual input was the first kind of sensory information a BCI could reconstruct. In 1999, a team led by Yang Dan at the University of California, Berkeley embedded electrodes into the thalamus of a cat in an area called the lateral geniculate nucleus, which receives signals from the retina.
As the neurons in this region fired, the research team could reconstruct what the cat was seeing and create movies from the recorded neural activity (8). In a human application of this type of technology, Avery Laboratories (now Avery Biomedical Devices), led by William Dobelle, pioneered a BCI in 2002 that allowed a man who had been blind since the 1970s to see and to perform tasks on his own that would have been very difficult without the benefit of vision (9).

[Image: Top rows: actual movie frames. Bottom rows: corresponding frames recreated from the cat's brain.]

BCI research exploded after the year 2000, when Miguel Nicolelis at Duke University demonstrated the successful use of a BCI that allowed a monkey to operate a robotic arm directed by the monkey’s motor neurons (10). Shortly thereafter, a group at Brown University led by John Donoghue demonstrated a similar concept but took it a step further, showing that the BCI could allow the monkey to actually feed itself using a robotic arm. It did so using information from far fewer neurons, to boot: Donoghue’s BCI required the input of only 7-30 neurons, whereas Nicolelis’ required input from 50-200 neurons (13, 14).

Clearly, Donoghue’s group had taken BCI technology to an advanced level, and they continued to pursue their research with the creation of BrainGate, a brain implant system owned by Donoghue’s company Cyberkinetics. BrainGate is designed to actuate movement intentions for people who lack control over their limbs, such as those with full paralysis and other severe physical disabilities (15). This technology is currently undergoing clinical trials for individuals with “cervical spinal cord injury, brainstem stroke, muscular dystrophy, or amyotrophic lateral sclerosis (ALS) or other motor neuron diseases” (16). Dr. Donoghue could not be reached for comment, although much of his groundbreaking work speaks for itself.
For example, a video shows one BrainGate participant, Cathy Hutchinson, using the interface to take a drink unaided for the first time in 15 years. In short, BrainGate translates a person’s thoughts and intentions into movement, even when their own body is incapable of doing so.

Of course, even the most cursory review of BCI research brings up a host of other potential applications. At Duke University, Miguel Nicolelis’ team has created the first brain-to-brain interface that allows direct communication of thoughts between two creatures, in this case rats (17, 18). Though this technology has yet to be tested in humans, this type of “synthetic telepathy” or “silent communication” research is of great interest to the United States Army, which has offered grants to researchers hoping to make progress in this area (19).

A burgeoning market for these technologies has formed in tandem, with many companies competing with Cyberkinetics or taking BCIs in different directions. These companies include Starlab (20) and Neuroelectrics (21), which develop wireless EEG and neurostimulator technologies. In the gaming industry, BCI technologies are currently available from Emotiv Systems, NeuroSky, and Interaxon (22). NeuroSky’s Bluetooth-enabled headset-based BCI allows users to play computer and smartphone games using only their minds, including a zombie-chasing game (22, 23). Emotiv’s headset enables users to play Tetris-style puzzle games and search Flickr photos by emotional keywords (22, 24). Taking a different tack, Interaxon’s “Muse” wireless headband allows users to exercise their powers of concentration with an app that focuses users on specific aspects of a screen (22, 25). Samsung’s Emerging Technology Lab is developing a tablet users can control with their brain activity, which is monitored by an electrode-studded cap (22, 26). Other companies, such as intendiX (27) and Mind Solutions, Inc.
(28), have focused on reading and spelling applications in which a user need only think words, which the computer then spells out. These applications hold particular utility for people who cannot speak or otherwise communicate, such as those with full paralysis (22).

As exciting as BCI and brain-to-brain communication technologies are, a myriad of ethical issues surround their development and human use. Foremost among these is the question of what exactly constitutes a BCI. Some have posited that a BCI can be as simple as a computer capable of detecting the brain’s electrical impulses, such as an EEG, while others consider the use of a machine or other device to turn those impulses into action to be critical to the definition of a BCI.

Even with that decided, additional issues emerge. In clinical trials of BCI technologies for individuals incapable of speaking for themselves, how should informed consent be obtained? Potential risks and benefits must be explained to study subjects, as well as potential side effects. Unfortunately, even when informed consent can be obtained, any brain implantation surgery carries a risk of serious complications, including scarring of the brain (5).

Moreover, if and when the interface makes an error in the interpretation of user speech or movement, who is responsible for the unintended action: the user or the machine? This assumes, of course, that BCI and brain-to-brain technologies currently tested only in animals are actually applicable to humans, as many animal models are known to have major differences from humans that limit generalizability, especially in neuroscience research (29).

Even if they are generalizable, what are the implications for mind reading, once only a science fiction writer’s daydream? What about mind control? Since the experiments that allowed a monkey to control a robot arm, a group of researchers at Harvard that included Dr. Ziv Williams has implanted a chip in a monkey’s brain that allowed the monkey to control the limbs of another, sedated monkey to move a joystick (11, 12). Of course, researchers urge caution in interpreting these experiments to mean that a person could control the body of another, and they emphasize that this technology will have implications for helping those with paralysis overcome their disabilities. Yet, in the wrong hands, could such technology also be dangerous? Where does technology begin and our privacy end? Is it acceptable to use BCI technologies for interrogation? These questions are particularly pertinent given government interest in such projects (30), including DARPA’s “Silent Talk” initiative to investigate brain-to-brain interfaces for soldiers (31).

Perhaps Hans Berger was onto something after all in his musings on telepathy, even if he was over a century early to the party. How the implications of his hunch will play out, however, remains to be seen.
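
For readers curious about how a cosine-based algorithm like the one credited to Georgopoulos above can turn neural firing into a movement direction, here is a minimal sketch of one common textbook formulation, often called a population vector. It assumes each neuron fires fastest for its own "preferred" direction and that its rate falls off as the cosine of the angle away from it. The neuron count, baseline rate, and gain are made-up illustrative values, and the function names are hypothetical; none of them come from the studies cited in this article.

```python
import math

def firing_rate(movement_angle, preferred_angle, baseline=20.0, gain=15.0):
    """Cosine tuning: the rate peaks when movement matches the preferred direction.

    baseline and gain (spikes per second) are illustrative assumptions.
    """
    return baseline + gain * math.cos(movement_angle - preferred_angle)

def decode_direction(rates, preferred_angles, baseline=20.0):
    """Population vector: add up each neuron's preferred-direction unit vector,
    weighted by how far its rate sits above or below baseline."""
    x = sum((r - baseline) * math.cos(p) for r, p in zip(rates, preferred_angles))
    y = sum((r - baseline) * math.sin(p) for r, p in zip(rates, preferred_angles))
    return math.atan2(y, x)

# Eight hypothetical neurons with evenly spaced preferred directions.
preferred = [2 * math.pi * i / 8 for i in range(8)]

# Simulate the rates produced by a reach at 60 degrees, then decode them.
true_angle = math.radians(60)
rates = [firing_rate(true_angle, p) for p in preferred]
decoded = decode_direction(rates, preferred)

print(round(math.degrees(decoded), 1))  # prints 60.0
```

With evenly spaced preferred directions and noise-free rates, the decoded angle matches the simulated one. Real recordings are noisy and unevenly tuned, so a working BCI would need many more neurons and a calibration step than this toy example suggests.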
