
Thank you for following the launch of Ursa Sapiens!

We will resume publishing content during the spring semester. If you have any questions, comments, or suggestions, please drop us a line at brown.epub[at]thetriplehelix.org! Happy holidays!

The Ursa team


by Joseph Frankel '16

Purple brain + organic shapes = folk psychology? [image via]

A month into the semester, a close friend revealed to me her firm belief in the Myers-Briggs Type Indicator (MBTI). Sitting me down in front of her laptop, she directed me to an online version of the test. About five minutes and 72 yes-or-no questions later, the test revealed that I am an ENFP: more Extroverted than Introverted, more Intuitive than Sensing, more Feeling than Thinking, and more Perceiving than Judging.

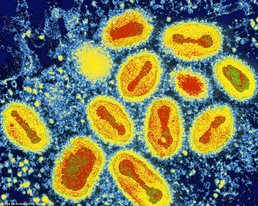

Immediately, I searched for information to help me interpret these findings. I found websites full of neat little graphics and brief descriptions of each of the 16 personality types “in a nutshell.” Thus began my introduction to the cult of the MBTI. Used by over 10,000 companies, 2,500 colleges, and 200 government agencies, the MBTI is the most widely used personality test in the world. It was created during World War II by amateur psychologist Isabel Briggs Myers and her mother, Katharine Briggs, and its initial purpose was to help women who were entering the workforce to replace men who had gone to war determine in what line of work they would be “most effective and comfortable” (1). Myers and her mother were avid followers of the work of the Swiss psychologist Carl Jung and based the test on his theory of types: the idea that people tend toward one style or another of interacting with the world along several different axes. In the years since, the MBTI has ballooned into the go-to metric for human resources departments throughout the world for job placement, team building, and career and academic advising.

by Denise Croote '16

Not your mama's SpaghettiOs. [image via]

It seems as though the Mayans made a slight miscalculation. In 2012 we narrowly escaped a meteor storm and a black hole to live another day, but we’re definitely not free from danger. Some dangers we can see and fear, like tornadoes and sandstorms, but other hazards are far less evident. Viruses are very good at slipping under our radar, mainly because we can’t see them; we often become aware of their presence only once they have begun infecting our population. Smallpox, an infectious disease responsible for an estimated 300 to 500 million deaths in the 20th century, might just be back to haunt us (1).
When the World Health Organization (WHO) announced the global eradication of smallpox in 1980, smallpox vaccinations were discontinued in the United States and several other industrialized countries. By 1985, routine vaccination had been eliminated across the globe. This reduced health care costs but left the population susceptible to a smallpox outbreak (1). If the smallpox virus were to fall into the wrong hands, it would be a deadly bioterror weapon: our vulnerable population would guarantee the rapid spread of the disease, and to this day there is no known cure. Smallpox begins with a simple fever and quickly progresses to a systemic rash. The rash develops into firm pustules that eventually crust over and scar. Approximately 30% of those infected with smallpox die from the disease. A release in the United States would undoubtedly result in mass sickness and widespread panic (2).

by Katie Han '17

Apple has your fingerprints too. [image via]

Last September, Apple Inc. released its long-awaited next-generation smartphone, the iPhone 5S. While reading the live updates of the product launch event alone in my bed, I squealed. Fingerprint-recognizing Touch ID? Champagne gold color? At first, it seemed like Apple had brought us a brand-new, high-tech device with a bit of luxury thrown in. However, one thing bothered me: the familiar “S” at the end of its name. That single letter implied that the “new” phone had the same design as its predecessor, the iPhone 5, with only slight improvements in its functions. Granted, the phone has the most recent and advanced hardware and an impressive camera, but those features were not enough to keep me dazzled for long. When my closest friend Kat’s iPhone 5S finally arrived a whole month after she reserved it, I was the first to rush to her room and play with the new gadget.
Yet after a few tries with the fingerprint recognition, I lost interest, realizing that the rest was essentially the same as my iPhone 5.

iOS vs. Android market share by country. [image via]

The iPhone has revolutionized our everyday lives since its introduction in 2007. The way we interact with each other has changed dramatically; it is perfectly normal to see people staring into their phones on the street, in cafes, and even while they are talking with others in person. Social networking services such as Facebook and Twitter offer instant access to information from all over the world. Of course, whether this has been for better or for worse remains debatable. Moreover, our attachment to our phones extends beyond Internet access. I admit it: I often replace the question “Do you know the time?” with “Do you have your phone?” Perhaps because the first iPhone was such a revelation, users have grown to expect more and more from each new iPhone, especially as rival smartphones advance. In particular, Samsung’s Android-based Galaxy series is expanding at an incredible rate: Samsung’s global smartphone market share topped 32% in the third quarter of 2013. Meanwhile, its latest model, the Galaxy S4, broke the record for the best-selling smartphone in Samsung’s history. With bio-sensitive technology such as eye tracking, Samsung smartphones pose a significant threat to Apple, the number one brand in the world. Apple is clearly aware of these challenges; its recent products suggest that it has learned from the competition. The completely redesigned iOS 7 has been accused of resembling Android. Examples of the similarities include Control Center, with its shortcuts to Wi-Fi and Bluetooth, and the multitasking screen, with its previews of open apps.

His face says it all. [image via]

Despite the seemingly slow progress relative to high expectations, the iPhone 5S is by far the fastest-selling smartphone: nine million units were sold over its first weekend, compared with five million iPhone 5 units in that phone’s first weekend last year. Walt Mossberg of All Things Digital praised the phone, calling it “the best smartphone on the market.” As an Apple user, I wholeheartedly agree with this label; the responsive, well-connected device is invaluable in daily life. Without iMessage, my friends and I would not be able to find each other after class, and without Music, we would not have our spontaneous background music. Every weekend, FaceTime gives me a very tangible connection with my parents, who live halfway around the world. Most importantly, three weeks ago the Bank of America app let me find out quickly that my credit card had been stolen and was being used; without it, the situation might have turned out far worse.

The climate for Apple seems clear for now; however, given the turbulence in the smartphone business as a whole, the future is unpredictable.

by Matthew Lee '15

He knew what was up. [image via]

From the standpoint of daily life, however, there is one thing we do know: that we are here for the sake of each other - above all for those upon whose smile and well-being our own happiness depends, and also for the countless unknown souls with whose fate we are connected by a bond of sympathy. Many times a day I realize how much my own outer and inner life is built upon the labors of my fellow men, both living and dead, and how earnestly I must exert myself in order to give in return as much as I have received.

This semester is coming to an end. Fittingly, Providence is steeped in snow. For some, it is already over. The rest of us have exams to take and papers to write.

Yet we will all wonder where three and a half months went. I hope that you can say it was spent laughing with friends. I hope you can say that you climbed mountains and reached new plateaus with the people you care about. I hope you can say that you made a smile happen everywhere you went. At the top of this post, Einstein reminds us that we are all interconnected and that our lives are only as meaningful as they are to the people we touch. I am humbled. Far too often I have struck out on my own, inconsiderate of others and of the notion that I am the sum of my interpersonal interactions. I have wasted so much time this semester because I have not shared myself. When all is said and done, thoughts that were neither shared nor turned into actions will not have touched another individual. They will not have mattered. I hope you can say that you were not like me. In the midst of studying and paper writing, do not forget that we are all in this together: finals period, Brown, winter in Providence. Make a smile happen. Support others in their struggles and show your gratitude to those who support you. Make time to say goodbye to friends. By the end of the week, it'll be over. "Once more unto the breach, dear friends, once more."

by Connor Lynch '17

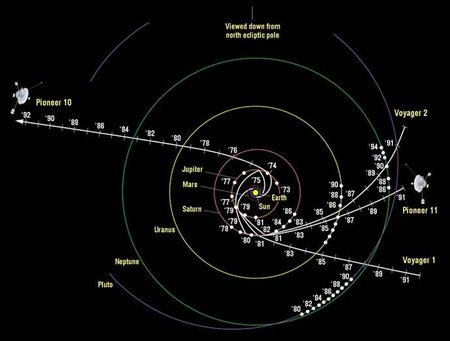

A spacetimeline of the Voyager and Pioneer missions. [image via]

The tireless Voyager 1 spacecraft, launched in the disco era (1977, to be exact) and now more than 11 billion miles from Earth, has become the first man-made object to enter interstellar space, NASA announced on September 12, 2013 (1). Interstellar space, scientists now know, is denser with particles than the space closer to the sun, and it “hisses” at a characteristic frequency.

There is now strong evidence that NASA’s Voyager 1 has crossed into a realm where no spacecraft has gone before. Scientists have long thought that there would be a boundary somewhere out there where the million-mile-per-hour “solar wind” of particles would give way abruptly to cooler, denser interstellar space, permeated by charged particles from around the galaxy. That boundary, called the heliopause, turns out to be 11.3 billion miles from the sun, according to Voyager’s instruments and NASA’s calculations. As of the writing of this article (November 3, 2013), Voyager 1 is about 18,939,000,000 km from Earth and Voyager 2 about 15,479,000,000 km. These spacecraft are moving at speeds of over 20 km/s and are powered by radioisotope thermoelectric generators (RTGs), which are fueled by naturally decaying plutonium (2).

by Layla Kazemi

This article was written by a student at the Wheeler School. Brown's chapter of The Triple Helix collaborates with the Wheeler School to engage high school students in science journalism.

Does life exist elsewhere in the universe? Could life ever exist there in the future, and has it in the past? Humans have speculated about the possibility of life elsewhere in the universe for centuries, and it remains one of the most pondered questions we face. Mars has been the focus of much of the research on this question because of the planet’s proximity to Earth, and although exploration of the planet began over half a century ago, the possibility of life on Mars is as pertinent today as it was decades ago.

"I hope Martians are friendly." [image via]

For the past couple of years, NASA has been working with a car-sized robotic rover named Curiosity to explore Gale Crater on Mars.
The rover landed on Mars aboard the Mars Science Laboratory (MSL) spacecraft on August 6, 2012, and will spend one Martian year (687 Earth days) exploring the planet. The goal of the project is to assess whether the area has ever had, or still has, environmental conditions favorable to life (1). So what exactly is necessary for a planet to be considered habitable? According to NASA, three conditions are crucial for life to exist: liquid water, the other chemical ingredients used by life, and a source of energy (1). To search for these conditions, the rover is using a strategy that NASA’s Mars exploration program has used for years: following the water (1). Since virtually every environment on Earth that contains liquid water sustains microbial life, this strategy makes the most sense. Researchers believe that Gale Crater, where the rover landed and is conducting its research, was wet at some point (1). Curiosity’s exact landing site is near the foot of a layered mountain named Mount Sharp, which contains minerals that form in water and may preserve organics (1). These minerals were identified through five years of research by NASA’s Mars Reconnaissance Orbiter before Curiosity’s launch, during which 30 potential Martian landing sites were evaluated (1).

by Amy Butcher '17

It’s 2013, and social media is everywhere. Words like “blogosphere”, “twittersphere”, and “[insert social medium here]sphere” that used to sound so awkward coming from the mouths of news broadcasters are now part of everyday speech. As for science’s relationship with social media, at first glance the pervasiveness of high-speed communication seems fantastic for scientists and science lovers alike. Surely social media must be good for improving scientific literacy: more ways to share and access information mean a more informed and perhaps even more enthused public, right?
Anyone who was online when Curiosity landed on Mars could see firsthand social media’s role in stirring up scientific awe and inspiration; people were truly wrapped up in the story across nearly every social media platform. Hooray! There is, however, a darker and more insidious trend in social media that counteracts genuine scientific communication online…

by Tiffany Citra '17

1 league = 3.452 mi = 5.556 km [image via]

Often regarded as the father of science fiction, Jules Verne wrote many works involving science and technology far ahead of their era. But how accurate is the science portrayed in his writing? Let’s examine the credibility of the assertions Verne makes in one of his most notable books, 20,000 Leagues Under the Sea. The story starts with Professor Pierre Aronnax, who embarks on a mission to hunt down a mysterious “giant narwhal.” However, when he finally comes into contact with his target, his ship cannot resist the “narwhal’s” strength and eventually falls apart. Luckily, he survives and discovers that the “narwhal” is in fact a submarine. Upon finding him, Nemo, the captain of the submarine, which is known as the Nautilus, brings him aboard. Despite being kept as a prisoner, Professor Aronnax is taken on a journey across the sea to explore things not yet discovered by mankind. So what about the science?

by Denise Croote '16

Believe it or not, cannibalism isn’t a thing of the distant past. As recently as the 1950s, villagers on the island of New Guinea actively practiced cannibalism. After the death of a loved one, relatives gathered to bless and consume the body. The women and children led the ceremony, while the men refrained from partaking in the feast. Interestingly, the women and children began to develop a previously unrecognized neurological disorder, while the men did not. Kuru, as they called it, was a disorder in which the infected individual shook, failed to complete simple motor commands, and refused nourishment. The villagers believed that spirits sent Kuru to them as a punishment, but Michael Alpers, an Australian medical researcher, wasn’t convinced. Dedicating his life to investigating Kuru, Alpers made a shocking discovery that turned our understanding of diseases upside down.
Could a neurological disorder really be contagious? And could cannibalism fuel its transmission? To learn more about Alpers and his work, watch the documentary Kuru: The Science and the Sorcery, or check out the article "The Last Laughing Death" at The Global Mail.